EchoXP

Role: Product Designer, Full Stack Developer

Project type: Individual project (final year project)

Duration: September 2024 - April 2025 (8 months)

Tools: Visual Studio Code, React Native, TypeScript, Expo

Deliverables:

High-fidelity mobile app prototype

Chatbot system with OpenAI GPT-4

Sensor data collection

User testing

Comparative study results and analysis

Final research report

A chatbot-based mobile application for collecting real-time experiences using conversational AI and sensor data

2025

OVERVIEW

Introduction

EchoXP: AI-Enhanced Data Collection for Healthcare Research

EchoXP is a chatbot-based mobile application that reimagines how we collect subjective health data. Developed as my final year university project, it explores whether conversational AI can make data collection more engaging and effective than traditional surveys. Using chronic musculoskeletal pain as a case study, the app integrates GPT-4-powered conversations with real-time sensor tracking to capture richer and more contextual information about patient experiences.

A comparative study with 12 participants suggested that conversational interfaces like EchoXP can elicit longer and more detailed responses while reducing user frustration, opening new possibilities for mobile health research and continuous patient monitoring.

The Process

Project Objectives

The following objectives address both the technical challenge of developing a chatbot-based mobile application and the empirical question of whether it improves user engagement. Focusing on chronic musculoskeletal pain as a case study, this research contributes to healthcare while developing methodologies applicable to other conditions with subjective experiences.

To investigate the effects of implementing a chatbot system on user engagement with the mobile application

To conduct comparative user testing that evaluates the user engagement of a chatbot mobile application against a traditional survey approach

RESEARCH

The Problem

1. Chronic musculoskeletal pain

20% of adults worldwide are affected by chronic pain

20.4% (50 million) of adults in the US have chronic pain

61% of adults with chronic pain in Europe are less able or unable to work outside the home

The complex nature of chronic musculoskeletal pain presents challenges for healthcare. Unlike acute pain, which is caused by a specific disease or injury, chronic pain can persist independently of any clear physical cause and is often more complex to manage. This prolonged pain is a global health issue, affecting a substantial portion of the population and imposing a heavy burden on individuals and society. It is therefore worth exploring how technology can capture these experiences automatically and in context.

2. Limitations in traditional data collection methods

Retrospective pain reports are subject to recall bias (influenced by peak intensity and recency)

Pain experiences are contextual and dynamic, varying throughout the day based on activities and environmental factors

Paper diaries show extremely poor compliance rates (11% actual compliance vs. 94% for electronic diaries)

Current data collection methods fail to capture the complex, fluctuating nature of chronic pain

This significant gap highlights the challenges in maintaining consistent user engagement with traditional data collection methods and calls into question the validity of data collected through such approaches.

3. Lack of user engagement in existing health applications

Health app retention rates drop dramatically (30% after 30 days, 10% after 90 days)

Pain tracking applications show an even faster decline in engagement

Progressive disengagement negatively impacts data quality and completeness

Despite smartphones being ideal platforms for ecological momentary assessments (EMA), current solutions fail to maintain long-term user engagement.

Review of Existing Work

Review of Existing EMA Applications

Existing EMA applications approach data collection differently.

ilumivu offers a highly customizable research infrastructure for longitudinal studies with adaptive sampling techniques.

AbleTo SelfCare+ takes a consumer-oriented approach, integrating evidence-based CBT tools with mood tracking for mental health support.

m-Path bridges therapy and daily life through blended care, featuring mobile sensing capabilities and gamification to enhance user engagement and motivation through customizable rewards and achievement systems.

Review of User Studies on Mobile App Design Features for Improving User Engagement

Wei et al. (2020) conducted a systematic review of 35 studies examining mHealth app engagement features across diverse user populations (ages 14-74). The research identified five key design features that enhance engagement: personalization, reinforcement, communication, navigation, and interface aesthetics. While the study did not explicitly examine chatbots, these features, particularly personalization, communication, and interface design, are directly applicable to my project and crucial for maintaining consistent user engagement in health-related data collection applications.

Reinforcement

Personalization

Navigation

Communication

Interface Aesthetics

Review of Large Language Models (LLMs) and Conversational AI

Large Language Models (LLMs) like OpenAI's GPT-4, Anthropic's Claude 3.7 Sonnet, Meta's Llama 4 Scout, and Google's Gemini 2.5 Pro have transformed chatbot capabilities through multi-stage training processes, including pre-training on vast text data and fine-tuning with techniques like Reinforcement Learning from Human Feedback (RLHF). Modern transformer-based architectures enable more human-like, contextually aware conversations compared to traditional rule-based chatbots.

OpenAI's GPT-4 was selected for this project due to its straightforward API integration, strong context-handling capabilities, precise prompt engineering support, and demonstrated high performance in producing relevant, coherent, and natural responses. These are essential qualities for healthcare applications requiring empathy and appropriate emotional support.

DESIGN & DEVELOPMENT

Requirements Analysis

Defines project scope:

Establishes what the application must do (functional) and how well it must perform (non-functional)

Guides development:

Provides clear specifications for building the chatbot, sensor integration, database, and user authentication features

Ensures completeness:

Lists all necessary features, including chatbot conversation flow, emotion analysis, IMU sensor data collection, and data storage protocols

Provides testing criteria:

Creates measurable benchmarks to verify the application meets all specified requirements before user testing

Documents stakeholder needs:

Translates research objectives into concrete technical specifications that address both user experience and data collection goals

System Architecture

The application implements a unidirectional data flow:

User interactions and sensor readings are captured on the client device

Data is processed locally for immediate feedback and temporary storage

Processed data is sent to the API tier for validation and enrichment

Validated data is stored in the Supabase PostgreSQL database

Confirmations and necessary responses are returned to the client

This flow ensures data integrity while maintaining a responsive user experience.
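The one-way flow above can be sketched as a chain of plain functions in which data only moves forward and the client receives either a confirmation or an error. This is a hypothetical sketch, not EchoXP's code: `RawEntry`, `StoredEntry`, the validation rules and the word-count enrichment are illustrative assumptions, and the `Store` interface stands in for the Supabase client (whose JavaScript library exposes inserts as `supabase.from(table).insert(rows)`).

```typescript
// Hypothetical sketch of the one-way flow:
// client capture -> local processing -> API-tier validation/enrichment
// -> database -> confirmation back to the client.

interface RawEntry {
  nickname: string;
  message: string;
  capturedAt: number; // ms since epoch, set on the device
}

interface StoredEntry extends RawEntry {
  wordCount: number; // enrichment added by the API tier
  receivedAt: number; // time the API tier accepted the entry
}

// Stand-in for the database client (Supabase in EchoXP).
interface Store {
  insert(entry: StoredEntry): Promise<void>;
}

type Confirmation = { ok: true; entry: StoredEntry } | { ok: false; error: string };

// Client tier: light local processing before anything leaves the device.
function processLocally(entry: RawEntry): RawEntry {
  return { ...entry, nickname: entry.nickname.trim(), message: entry.message.trim() };
}

// API tier: reject malformed input, then enrich it. Returns an error string on failure.
function validateAndEnrich(entry: RawEntry, now: number): StoredEntry | string {
  if (entry.nickname === "") return "missing nickname";
  if (entry.message === "") return "empty message";
  if (entry.capturedAt > now) return "timestamp is in the future";
  const wordCount = entry.message.split(/\s+/).filter((w) => w.length > 0).length;
  return { ...entry, wordCount, receivedAt: now };
}

// Data only moves forward; the client gets a confirmation or an error back.
async function submit(raw: RawEntry, store: Store, now: number = Date.now()): Promise<Confirmation> {
  const checked = validateAndEnrich(processLocally(raw), now);
  if (typeof checked === "string") return { ok: false, error: checked };
  try {
    await store.insert(checked);
  } catch (err) {
    return { ok: false, error: String(err) };
  }
  return { ok: true, entry: checked };
}
```

Keeping validation in a separate tier means the client can stay responsive (it only trims and forwards), while anything that reaches the database has already passed the same checks.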

Chatbot Implementation

The chatbot, which I named "Ekko", was implemented using OpenAI's GPT-4 API with a text-to-text interface, selected for its balance of response quality, ease of API integration, and real-time conversation capabilities. Through prompt engineering, the system was configured to maintain a friendly personality while following a structured flow of 15 predefined questions, creating natural transitions by referencing previous user responses.

The implementation includes emotion analysis functionality that extracts and quantifies seven positive emotional states (joy, satisfaction, excitement, enthusiasm, pleasure, happiness, contentment) from user responses with intensity scores from 0-5. This hybrid approach balances structured data collection with natural conversational flow, making the experience feel less like a survey and more like a thoughtful conversation.
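The emotion analysis step depends on the model returning labels and intensities the app can trust. Below is a minimal sketch of validating that output, assuming (hypothetically) that the model is asked to reply with a JSON object mapping emotion names to 0-5 scores, such as `{"joy": 3, "happiness": 4}`. The output format and function names are illustrative, not taken from EchoXP.

```typescript
// Hypothetical sketch: turning the model's emotion reply into a complete,
// bounded score record for the seven positive emotions.

const EMOTIONS = [
  "joy", "satisfaction", "excitement", "enthusiasm",
  "pleasure", "happiness", "contentment",
] as const;

type Emotion = (typeof EMOTIONS)[number];
type EmotionScores = Record<Emotion, number>;

// Unknown labels are ignored, missing or non-numeric labels default to 0,
// and values are clamped to the 0-5 intensity range.
function parseEmotionScores(raw: string): EmotionScores {
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch {
    parsed = {}; // malformed reply: treat as "no emotion detected"
  }
  const obj: Record<string, unknown> =
    typeof parsed === "object" && parsed !== null && !Array.isArray(parsed)
      ? (parsed as Record<string, unknown>)
      : {};
  const scores = {} as EmotionScores;
  for (const emotion of EMOTIONS) {
    const value = obj[emotion];
    const n = typeof value === "number" && Number.isFinite(value) ? value : 0;
    scores[emotion] = Math.min(5, Math.max(0, n));
  }
  return scores;
}

// Highest intensity across the seven emotions (0 if none expressed).
function maxIntensity(scores: EmotionScores): number {
  return Math.max(...EMOTIONS.map((e) => scores[e]));
}
```

Clamping and defaulting on the client means a malformed or over-eager model reply degrades to zeros rather than storing out-of-range scores.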

Code snippet of prompting the GPT-4 model to brief the chatbot “Ekko”
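The prompt-driven question flow described above could be structured roughly as follows. This is a minimal sketch, not EchoXP's code: the `QUESTIONS` list (three placeholder food questions standing in for the 15 used in the study), the prompt wording, and the `buildMessages`/`isFlowComplete` helpers are hypothetical. The idea is to regenerate the system prompt on each turn so that GPT-4 acknowledges the previous answer and then asks exactly the next predefined question. The resulting array has the shape of the `messages` list accepted by OpenAI's Chat Completions endpoint (for example `client.chat.completions.create({ model: "gpt-4", messages })` in the official `openai` npm package).

```typescript
// Hypothetical sketch: combining a persona system prompt with a fixed
// question flow for a Chat Completions-style API.

type Role = "system" | "user" | "assistant";

interface ChatMessage {
  role: Role;
  content: string;
}

// Placeholder questions; the study used 15 predefined questions.
const QUESTIONS: string[] = [
  "What is your favourite food?",
  "When did you last eat it?",
  "How does eating it make you feel?",
];

// Persona plus the single question the model must ask next.
function systemPrompt(questionIndex: number): string {
  const next = QUESTIONS[questionIndex];
  return [
    "You are Ekko, a friendly and empathetic research assistant.",
    "Briefly acknowledge the user's previous answer, referring to what they said,",
    `then ask exactly this question in a natural way: "${next}"`,
    "Do not ask any other questions.",
  ].join(" ");
}

// Message list for the next turn: a fresh system prompt, then the history.
function buildMessages(questionIndex: number, history: ChatMessage[]): ChatMessage[] {
  if (questionIndex < 0 || questionIndex >= QUESTIONS.length) {
    throw new RangeError(`No question at index ${questionIndex}`);
  }
  return [{ role: "system", content: systemPrompt(questionIndex) }, ...history];
}

// True once every predefined question has been asked.
function isFlowComplete(questionIndex: number): boolean {
  return questionIndex >= QUESTIONS.length;
}
```

Keeping the question order in application code, rather than relying on the model to remember it, is one way to keep a fixed question flow on track while the model handles the conversational transitions.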

Sensor Data Collection

The application integrates accelerometer and gyroscope sensors via Expo's sensor APIs to capture linear acceleration and rotational movements across three dimensions (x, y, z)

Sensor data collection activates automatically upon user login and continues throughout the session, with readings processed and stored in 20-second batches to balance data quality with system performance

Performance optimizations include dedicated background thread processing, buffered storage to reduce I/O operations, adaptive sampling that adjusts based on device constraints, and efficient JSON formatting to minimize storage and transmission overhead

This approach ensures comprehensive movement tracking without compromising the application's responsiveness during user interactions.
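The 20-second batching described above can be sketched as a small buffer that flushes whenever a reading falls outside the current window. This is an illustrative sketch under assumptions: in the app, readings would arrive from expo-sensors listeners such as `Accelerometer.addListener`, and `onFlush` would hand each batch to storage; here readings are passed in directly so the logic runs on its own. The `ImuBatcher` class and its field names are hypothetical. The `magnitude` helper computes the Euclidean norm of the three axes; assuming accelerometer values expressed in g, a phone at rest reads roughly 1.

```typescript
// Hypothetical sketch: buffering IMU readings into 20-second batches.

interface ImuReading {
  sensor: "accelerometer" | "gyroscope";
  x: number;
  y: number;
  z: number;
  timestamp: number; // ms since epoch
}

interface ImuBatch {
  startedAt: number;
  readings: ImuReading[];
}

const BATCH_WINDOW_MS = 20_000;

// Euclidean norm of the three axes (about 1 at rest if values are in g).
function magnitude(r: ImuReading): number {
  return Math.sqrt(r.x * r.x + r.y * r.y + r.z * r.z);
}

class ImuBatcher {
  private buffer: ImuReading[] = [];
  private windowStart: number | null = null;
  private readonly onFlush: (batch: ImuBatch) => void;

  constructor(onFlush: (batch: ImuBatch) => void) {
    this.onFlush = onFlush;
  }

  // Adds a reading; if it falls 20 s or more after the window start,
  // the buffered batch is flushed first and a new window begins.
  add(reading: ImuReading): void {
    if (this.windowStart !== null && reading.timestamp - this.windowStart >= BATCH_WINDOW_MS) {
      this.flush();
    }
    if (this.windowStart === null) this.windowStart = reading.timestamp;
    this.buffer.push(reading);
  }

  // Emits whatever is buffered (e.g. on sign-out) and resets the window.
  flush(): void {
    if (this.buffer.length > 0 && this.windowStart !== null) {
      this.onFlush({ startedAt: this.windowStart, readings: this.buffer });
    }
    this.buffer = [];
    this.windowStart = null;
  }
}
```

Writing one batch per window instead of one row per reading is what keeps I/O low; the trade-off is that up to 20 seconds of readings can be lost if the app is killed before a flush.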

UI Design - Key Features

EchoXP's UI follows a user-centered design approach prioritizing simplicity, consistency, and engagement through minimalist aesthetics and React Native Paper components

The interface features anonymous authentication using nicknames and recovery codes, intuitive tab-based navigation across Home, Chat, and Settings screens, and distinct visual designs for user versus chatbot messages with timestamps and avatars

Accessibility features include high-contrast text, responsive typography, clear interactive feedback, and comprehensive error handling with helpful instructions for issues like network failures or authentication errors

The chatbot "Ekko" is personified with a friendly robot avatar and empathetic personality to foster emotional engagement, while information is progressively disclosed to prevent cognitive overload and maintain a natural conversation flow
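Anonymous sign-in with nicknames and recovery codes needs codes that are hard to guess yet easy to type back. The sketch below is hypothetical, since EchoXP's actual scheme is not described here: it draws characters from a 32-symbol alphabet that leaves out the easily confused I, O, 0 and 1, so each random byte maps to a symbol without bias (256 is a multiple of 32), and it takes the random source as a parameter so it can be tested.

```typescript
// Hypothetical sketch: generating and normalising recovery codes for
// nickname-based anonymous accounts.

// 32 symbols: A-Z without I and O, plus digits 2-9.
const ALPHABET = "ABCDEFGHJKLMNPQRSTUVWXYZ23456789";

// `randomBytes` should be cryptographically secure in production,
// e.g. (n) => crypto.getRandomValues(new Uint8Array(n)).
function generateRecoveryCode(
  randomBytes: (n: number) => Uint8Array,
  groups = 3,
  groupLength = 4,
): string {
  const needed = groups * groupLength;
  const bytes = randomBytes(needed);
  if (bytes.length < needed) throw new Error("random source returned too few bytes");
  // byte % 32 is uniform because 256 is divisible by 32.
  const chars = Array.from(bytes.subarray(0, needed), (b) => ALPHABET[b % ALPHABET.length]);
  const parts: string[] = [];
  for (let g = 0; g < groups; g++) {
    parts.push(chars.slice(g * groupLength, (g + 1) * groupLength).join(""));
  }
  return parts.join("-");
}

// Normalises what a user types back in (case, spaces, dashes) for comparison.
function normaliseRecoveryCode(input: string): string {
  return input.toUpperCase().replace(/[^A-Z0-9]/g, "");
}
```

Grouping the code into short blocks and ignoring case and separators on input makes it easier to copy down and re-enter without weakening it.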

EchoXP: Login

EchoXP: Home

EchoXP - Demo Video

Code snippet of the System Prompt for the GPT-4 model to extract positive emotion labels and assign the intensity

EchoXP: Chat

EchoXP: Sign out

This demonstration video showcases the complete EchoXP user experience, from registration through conversational interaction with Ekko to completion of all 15 food preference questions. The walkthrough illustrates the chatbot's natural dialogue flow, sensor data collection in action, and the seamless user interface designed to maximize engagement while gathering detailed qualitative and quantitative data.

USER TESTING

Testing Objectives

Primary Objectives

To quantitatively measure and compare user engagement levels between the EchoXP chatbot application and a traditional Qualtrics survey using the validated User Engagement Scale Short Form (UES-SF) across its four dimensions (Focused Attention, Perceived Usability, Aesthetic Appeal, and Reward)

To investigate how user engagement affects the data gathered through both interfaces, specifically response length and consistency

To evaluate the effectiveness of integrating sensor data collection with self-reported information within the chatbot interface, examining both the technical feasibility and the contextual value

Secondary Objectives

To identify potential usability challenges in both interfaces that might influence engagement

To gather qualitative feedback from participants regarding their experience with each interface to inform future refinements and development

Testing Methodology

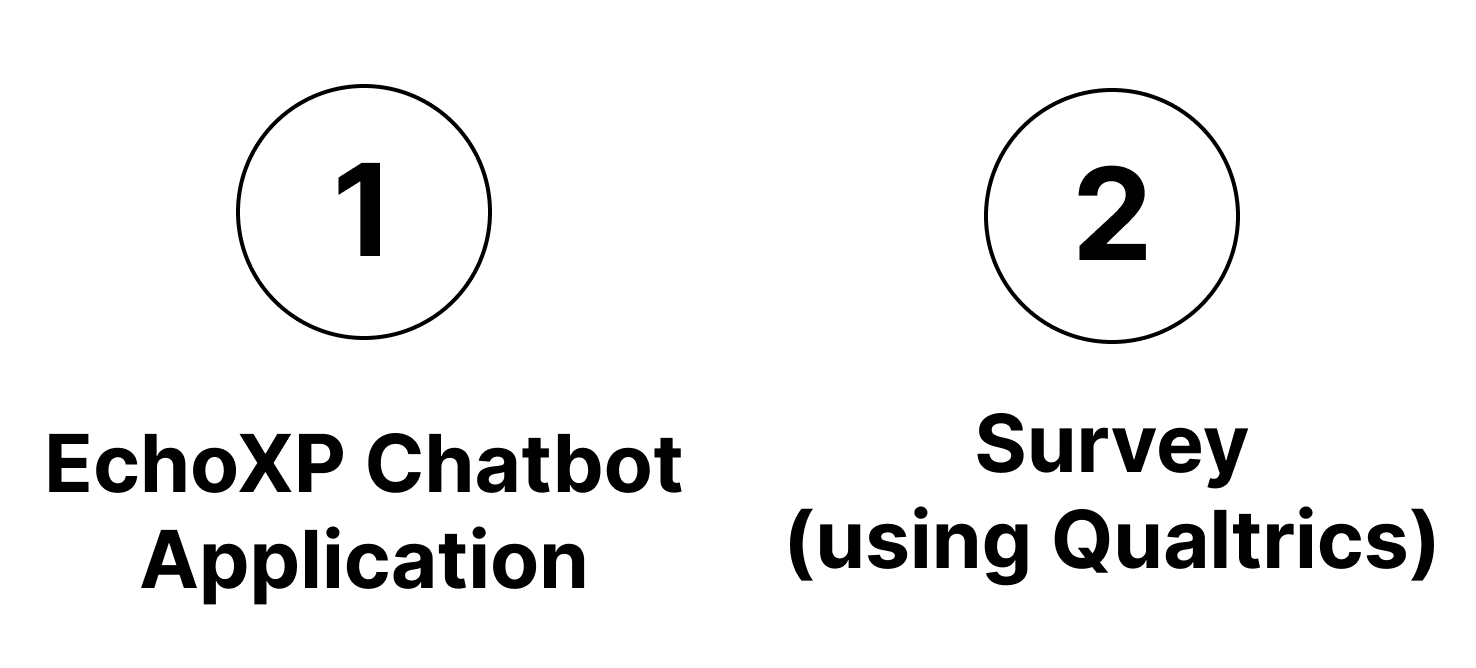

The test employed two methods: the EchoXP chatbot application and a traditional Qualtrics survey.

After completing their assigned method, participants filled in the Experience Evaluation Questionnaire, which is based on the User Engagement Scale Short Form (UES-SF).

—> Participant Recruitment

Sample size = 12 undergraduate students

Each participant was randomly assigned to one of the two testing methods

None had prior experience with either testing method

Each participant was assigned a unique nickname for anonymisation

—> Scenario & Protocols

Scenario: Food Preferences (favourite food)

Universally relatable

Does not require sharing sensitive information

Avoids evoking negative emotions

Protocols:

Briefed participants about the study purpose and procedure

Obtained their written consent (Information & Consent Form)

Participants were assigned to either the chatbot group or the survey group

After answering all of the questions, the participant immediately filled out the Experience Evaluation Questionnaire

Concluded each session with a brief interview about factors that influenced their engagement ratings in the Experience Evaluation Questionnaire and how they felt about their assigned testing method

—> Qualtrics Survey

Styled to resemble EchoXP's aesthetics

Shows the questions one at a time

—> User Engagement Short-Form (UES-SF)

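The Experience Evaluation Questionnaire is based on the User Engagement Scale Short Form (UES-SF), a validated 12-item questionnaire that measures engagement across four dimensions (Focused Attention, Perceived Usability, Aesthetic Appeal, and Reward)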
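The subscale and overall UES-SF scores reported below can be computed as in this sketch. It assumes the standard instrument (12 items, three per subscale, each rated 1 to 5), reverse-scores the three negatively worded Perceived Usability items as in the standard UES-SF scoring, and uses hypothetical item keys such as `FA.1` and `RW.3` modelled on the labels in Graph 2. Whether the Experience Evaluation Questionnaire used exactly this scheme is an assumption.

```typescript
// Hypothetical sketch: scoring one participant's UES-SF responses.

type Subscale = "FA" | "PU" | "AE" | "RW"; // Focused Attention, Perceived Usability, Aesthetic Appeal, Reward
type UesScores = Record<Subscale | "overall", number>;

const SUBSCALES: Subscale[] = ["FA", "PU", "AE", "RW"];
const ITEMS_PER_SUBSCALE = 3;

function mean(values: number[]): number {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Validates a 1-5 rating and reverse-scores Perceived Usability items (6 - x).
function itemScore(key: string, raw: number): number {
  if (!Number.isInteger(raw) || raw < 1 || raw > 5) {
    throw new RangeError(`${key}: expected an integer rating from 1 to 5, got ${raw}`);
  }
  return key.startsWith("PU.") ? 6 - raw : raw;
}

// Subscale score = mean of its three items; overall = mean of all 12 items.
function scoreUesSf(responses: Record<string, number>): UesScores {
  const result = {} as UesScores;
  const all: number[] = [];
  for (const subscale of SUBSCALES) {
    const scores: number[] = [];
    for (let i = 1; i <= ITEMS_PER_SUBSCALE; i++) {
      const key = `${subscale}.${i}`;
      const raw = responses[key];
      if (raw === undefined) throw new Error(`Missing response for ${key}`);
      scores.push(itemScore(key, raw));
    }
    result[subscale] = mean(scores);
    all.push(...scores);
  }
  result.overall = mean(all);
  return result;
}
```

Group means such as the M values in Graph 1 would then be the average of these per-participant scores within each group.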

RESULTS & ANALYSIS

UES Analysis

Graph 1:

Chatbot group rated Perceived Usability higher (M=4.56) than the survey group (M=4.17)

Users found the chatbot interface more intuitive and less frustrating

Focused Attention scores slightly lower for chatbot (M=2.78) than survey (M=2.94)

Aesthetic Appeal slightly lower for chatbot (M=3.06) than survey (M=3.28)

Reward scores were similar (chatbot: M=3.28, survey: M=3.22)

Overall Engagement was similar (chatbot: M=3.42, survey: M=3.40)

Graph 2:

Survey group rated higher on:

"I lost myself in this experience" (FA.1)

"The time I spent doing this activity just slipped away" (FA.2)

"The format of this activity was attractive" (AE.1)

"This activity appealed to my senses" (AE.3)

Graph 3:

Strong negative correlation between Focused Attention and Reward (r=-0.58)

Higher levels of absorption are associated with lower perceived reward

Strong positive correlations between Overall Engagement and:

Perceived Usability (r=0.68)

Aesthetic Appeal (r=0.68)

Reward (r=0.66)

Weak negative correlation between Focused Attention and Overall Engagement (r=-0.11)

Weak-to-moderate positive correlation between Perceived Usability and Reward (r=0.26)

More usable interfaces tended to be perceived as somewhat more rewarding

Comparison of Response Lengths

Graph 4:

Chatbot elicited longer responses (median ≈ 8.5 words) than survey (median ≈ 6.5 words)

Wider variation in chatbot response lengths, showing a greater range of expression

Maximum average word count for chatbot (16.5 words) exceeded the survey maximum (9.5 words)

Conversational interface appeared to encourage more elaborate responses

At the lower end, the chatbot minimum (2 words) fell below the survey minimum (4.5 words)

Chatbot accommodated both detailed and brief responses, reflecting natural conversation patterns

Response length varied with the specific question and the participant's engagement level

Suggests the chatbot offers more flexible options for expression than traditional surveys

Message Activity & Sensory Activity

Graph 5:

Substantial variation in response length throughout conversations

Some participants (Dolphin and Pudding) produced notably longer responses at specific points

More detailed responses occurred at intervals, likely for questions that naturally elicited elaboration

Sensor activity generally remained stable (0.8-1.2 magnitude range) with occasional spikes

The relationship between physical movement and messaging behaviour varied by individual

Different interaction patterns among participants in the same (chatbot) group:

Some showed longer engagement (Pudding), while others completed the questions early (Brownbread, Trumpet)

Response Length vs. Emotional Expression

Graph 6:

Response lengths ranged from brief replies to approximately 40 words

Emotion intensity measured on a 0-5 scale across seven positive emotional states (joy, satisfaction, excitement, enthusiasm, pleasure, happiness, and contentment)

Moderate positive correlation (r=0.36) between word count and emotional intensity

Longer responses tended to contain somewhat higher levels of expressed emotion

Some shorter responses still conveyed high emotional intensity (e.g., Pudding's 10-word response exceeded an intensity of 4)

Some longer responses exhibited relatively low emotional scores

Some individuals showed consistent emotional expression regardless of message length

The relationship is not strictly linear across all users, suggesting individualized patterns

Post-Test Interview

Participants tried to “stress test” the chatbot by asking it questions in return

Chatbot demonstrated flexibility by responding to unexpected queries

Multiple participants described chatbot's tone as "overly nice" and too eager to please

The excessively empathetic personality was perceived as unnatural

Consistent pleasantness felt artificial compared to human conversation

Human conversation typically contains more variation in tone and formality

Despite engagement success, future versions need more balanced conversational style

Need more authentic interaction patterns that better mimic natural conversation

Balancing structure with conversational authenticity remains a key design challenge

DISCUSSION

Discussion & Implications

Chatbot interface elicited longer, more detailed responses than traditional surveys

Conversational AI demonstrated ability to collect richer qualitative data

Integrating sensor data with self-reports could provide a more holistic understanding of chronic pain

Potential for machine learning applications using combined data:

Predictive models for pain levels

Sentiment analysis capabilities

Activity classification from sensor data

Algorithms to help anticipate pain fluctuations

Limitations

Participants attempted to test system boundaries beyond the intended conversation flow

The computer science student demographic may have approached the chatbot more critically

Technical constraints emerged, including inconsistent sensor data collection

Fixed conversation flow and limited context awareness sometimes created disjointed interactions

Future needs: more adaptive conversations with better context awareness

Ethical Considerations

Future development requires balanced question design and authentic conversational capabilities

Health contexts demand a balance between data collection, empathy, and user sensitivity

Security concerns: data handling by LLM providers needs careful consideration

Need for stronger anonymization techniques and transparent data retention policies

Healthcare data regulation compliance is crucial for trust and protection

Success requires interdisciplinary collaboration: HCI, psychology, pain management, ML, data security

CONCLUSION

In Conclusion

The study met its primary objectives of investigating the chatbot's impact on user engagement

EchoXP demonstrated moderate success in enhancing user interactions

Participants produced longer and more detailed responses with the chatbot interface

Differences between interfaces were more nuanced than initially hypothesized

Comparative testing revealed promising insights about multimodal data collection

Integration of conversational and sensor data provided important contextual support for self-reports

Conversational AI showed potential for capturing more extensive health data in sensitive domains

Multimodal approach creates new pathways for more effective pain monitoring

Project serves as a stepping stone toward more effective, user-centered health solutions

Future solutions should continue to balance robust data collection with engaging user experience